GPT-5.5 Just Changed AI Workflow Automation Forever

OpenAI released GPT-5.5 on April 23, 2026. The headlines focus on bigger benchmark scores, but the real story is more practical: AI is moving from chat responses to real work execution. For teams building with AI agents, multi-model AI, live contexts, and workflow automation, this is an important shift.

I try not to overreact to every new model launch.

At this point, the AI world gets a new “best model” every few weeks. One model is better at coding. Another is better at long contexts. Another is faster or cheaper. If you run a team, it can feel impossible to know what actually matters.

GPT-5.5 is different enough that I think it is worth paying attention.

Not because every company should immediately rebuild everything around it. That is usually the wrong reaction. The more important point is what GPT-5.5 tells us about where AI is going.

We are moving past the chatbot era.

The next phase is about AI agents that can take a goal, break it into steps, use tools, recover when something goes wrong, and complete real work. That is the part I care about most, because it is exactly where AI workflow automation becomes useful for teams.

GPT-5.5 Is Built for Work, Not Just Answers

Most people still think of AI as a place where you type a prompt and get a response.

That was the original mental model. Ask a question. Get an answer. Rewrite an email. Summarize a document. Generate a few ideas.

That is still useful, but it is not where the biggest value is going to come from.

GPT-5.5 points toward a different model: give AI a job, not just a prompt.

The model has been described as a more agentic release, which means it is better suited for tasks that require multiple steps. Instead of only producing one polished response, it can reason through a sequence, make decisions, use tools, and keep moving toward an outcome.

That matters for real teams because most business work is not one step.

A sales workflow is not just “write an email.” It might involve checking CRM notes, reading the last meeting transcript, understanding the account, drafting a follow-up, scheduling a reminder, and updating the pipeline.

An engineering workflow is not just “write code.” It might involve finding the bug, reading related files, proposing a fix, running tests, and explaining the change.

A marketing workflow is not just “write a blog.” It might involve researching the topic, checking the keyword plan, drafting the article, creating image prompts, preparing metadata, and publishing it through the right process.

That is where GPT-5.5 becomes interesting. It is not just better at text. It is better aligned with the way real work happens.

The Benchmarks Are Strong, But the Direction Matters More

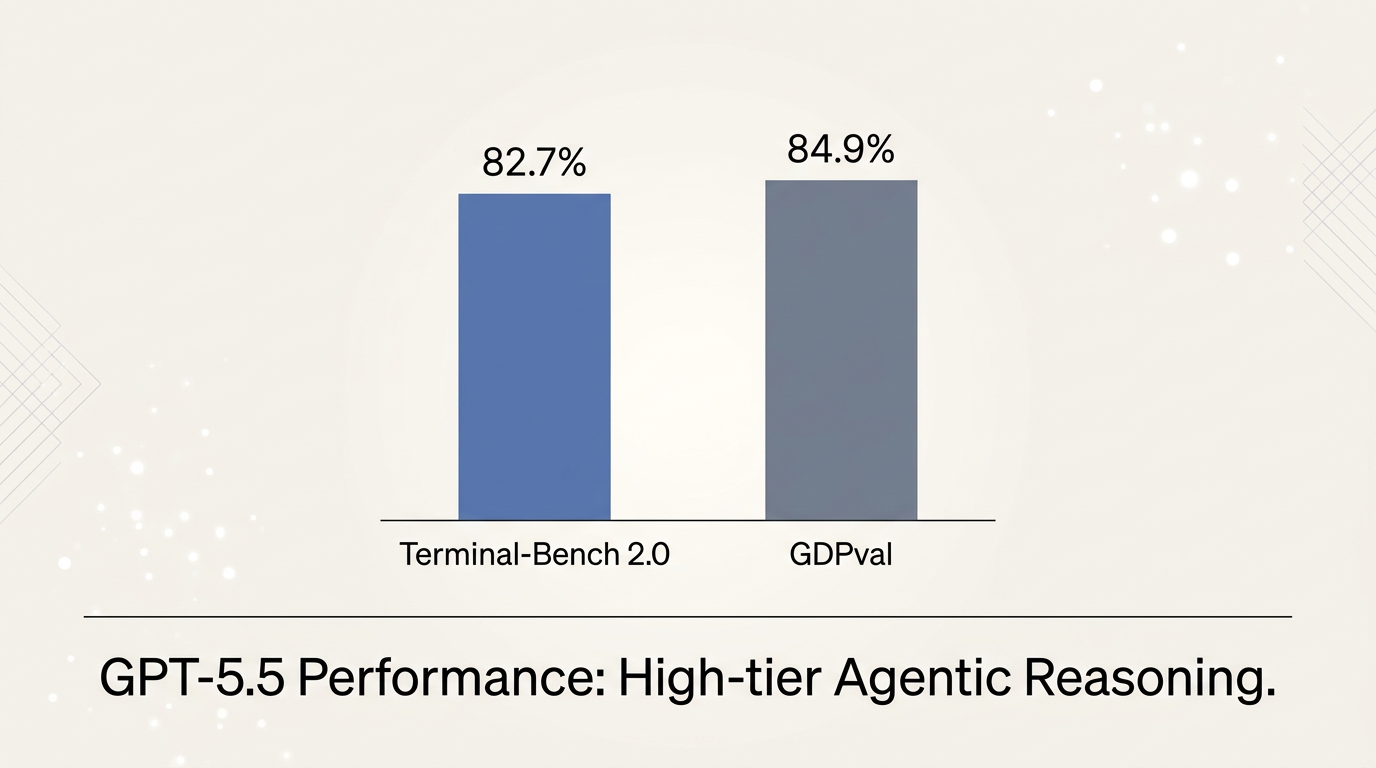

The headline numbers are impressive. GPT-5.5 has been reported at 82.7% on Terminal-Bench 2.0 and 84.9% on GDPval.

Those are not random vanity metrics.

Terminal-Bench is especially useful because it tests the model inside a real software environment. The model has to operate in a terminal, work with files, handle errors, and complete tasks that look much closer to actual development work.

That is very different from asking a model to answer a trivia question or write a clean code snippet in isolation.

Why does this matter?

Because production AI is messy. Real workflows include missing context, unclear instructions, broken tools, inconsistent data, and edge cases. A model that performs well in a realistic environment is more useful than a model that only sounds smart in a chat window.

Still, I would not build a strategy around one benchmark table.

Models will keep changing. GPT-5.5 will eventually be replaced by something better. Claude, Gemini, DeepSeek, Llama, Grok, and other models will keep improving too.

The bigger lesson is that frontier models are becoming capable enough to own larger parts of a workflow. The gap is no longer just model intelligence. The gap is whether your company has the right system around the model.

Minimal chart showing GPT-5.5 benchmark scores on Terminal-Bench 2.0 and GDPval.

Why This Matters for AI Agents

AI agents are becoming one of the most important categories in software.

But there is a big difference between a demo agent and a useful agent.

A demo agent looks impressive for five minutes. It opens a browser, clicks a few things, writes a summary, and maybe completes a simple task.

A useful agent can operate inside your actual business process. It knows where the right information lives. It understands the steps your team follows. It can use your tools. It can ask for approval when needed. It can leave a clear trail of what happened.

That is where GPT-5.5 fits into the bigger picture.

Better models make agents more reliable, but reliability does not come from the model alone. It also comes from context, tool access, workflow design, and repeatability.

This is why I think AI agents and AI workflow automation need to be discussed together. An agent without a workflow is just a clever assistant. A workflow without intelligence is just old automation with a new label.

The real value appears when the two come together.

The Role of Multi-Model AI

One mistake I see teams make is assuming the newest model should be used for everything.

That is usually expensive and unnecessary.

In a real AI workflow, different tasks need different models. Some steps need deep reasoning. Some need speed. Some need long context. Some need image understanding. Some just need a clean rewrite or a structured summary.

That is why multi-model AI is becoming practical, not just nice to have.

GPT-5.5 might be the right model for complex reasoning, agent planning, coding tasks, and high-stakes decisions. But smaller or cheaper models may be better for formatting, classification, simple summaries, or high-volume background tasks.
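As a rough illustration of that split, here is a minimal routing sketch. The tier names, task labels, and the fallback rule are all assumptions made for this example; they are not real model identifiers or any particular platform's API.

```python
# Hypothetical model tiers. These strings are placeholders, not real API model names.
FRONTIER = "frontier-model"        # where a model like GPT-5.5 might sit
LIGHTWEIGHT = "lightweight-model"  # a smaller or cheaper model

# Task labels are illustrative, taken from the kinds of work described above.
HEAVY_TASKS = {"complex_reasoning", "agent_planning", "coding", "high_stakes_decision"}
LIGHT_TASKS = {"formatting", "classification", "simple_summary", "background_batch"}


def pick_model(task_kind: str) -> str:
    """Choose a model tier for one workflow step based on its task label."""
    if task_kind in HEAVY_TASKS:
        return FRONTIER
    if task_kind in LIGHT_TASKS:
        return LIGHTWEIGHT
    # Assumed policy: unknown task kinds fall back to the stronger model.
    return FRONTIER


if __name__ == "__main__":
    for kind in ["coding", "formatting", "something_new"]:
        print(kind, "->", pick_model(kind))
```

The point of keeping the routing in one small function is that swapping in a newer model later means changing one constant, not every workflow.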

The future is not one model doing everything.

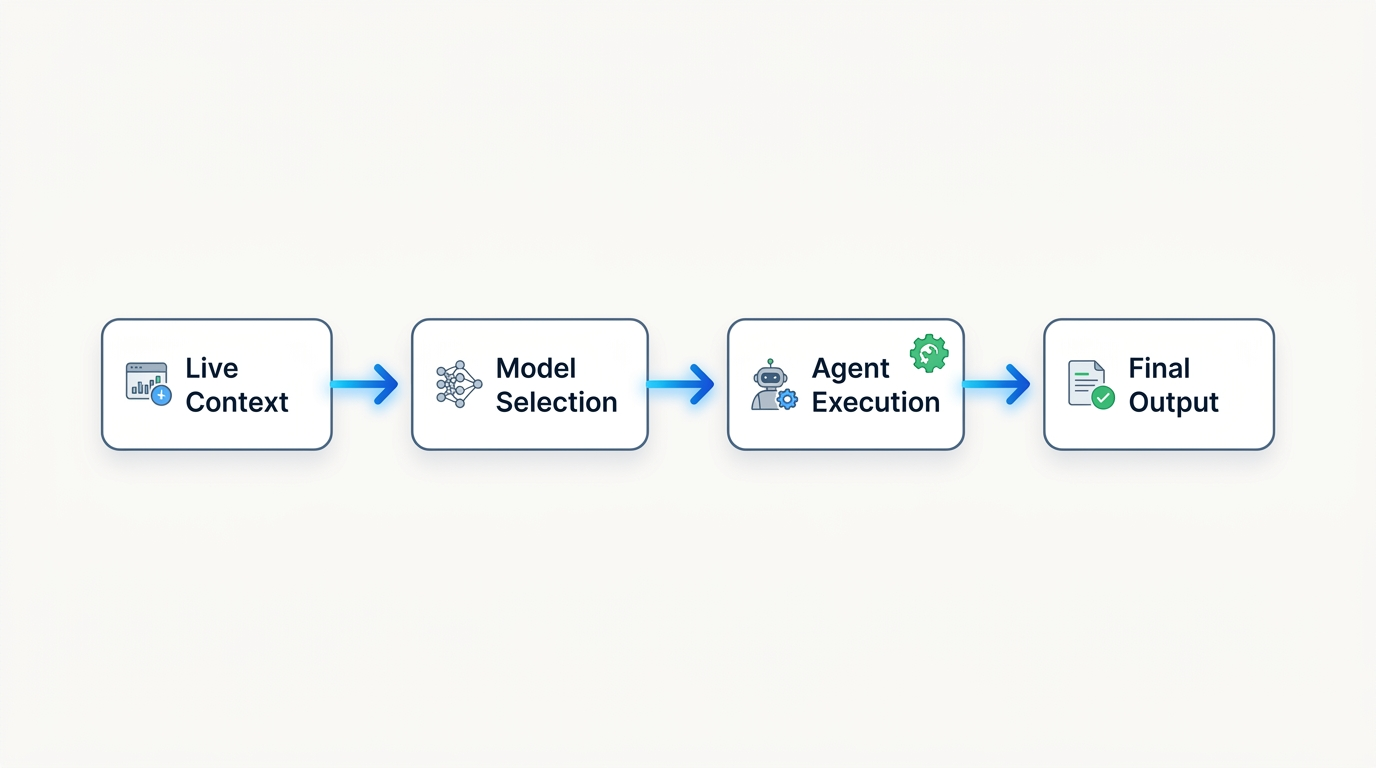

The future is a workflow AI platform where the right model is used for the right step, with shared context connecting the entire process.

At Springbase, this is one of the ideas we care about most. Teams should not be locked into one model provider or one style of AI. The model landscape changes too quickly. What matters is building workflows that can adapt as better models arrive.

Context Is the Real Multiplier

A more powerful model is useful, but it still needs the right information.

This is where many AI projects break down.

A team buys access to a great model, but the model does not know their documents, their meetings, their customers, their codebase, or their internal decisions. So employees spend their time copying and pasting context into prompts.

That does not scale.

For AI agents to become truly useful, they need an AI knowledge base behind them. They need access to the company’s actual knowledge, not just the public internet. They need meeting notes, PDFs, docs, Slack threads, GitHub issues, CRM data, and whatever else matters to the work.

Even better, that context should stay fresh.

That is why live contexts are so important. A static upload helps, but many businesses change every day. Projects move. Customers reply. Bugs get fixed. Meetings happen. Decisions get updated.

If the AI is working from old information, the workflow becomes fragile.

The best AI workflow automation systems will combine strong models like GPT-5.5 with live, grounded company context. That is when agents stop feeling like generic assistants and start feeling like teammates who understand the work.

Minimal diagram showing live context, multi-model AI selection, agent execution, and workflow output.

What Teams Should Do Now

I would not tell every team to drop everything and rebuild around GPT-5.5.

I would tell teams to ask better questions.

Do you have workflows that repeat every week?

Do your people spend time moving information between tools?

Do employees copy the same context into AI chats over and over?

Do you have meeting notes, docs, and project updates that should be searchable and usable by AI?

Do you have processes that could become AI recipes so the whole team can run them consistently?

If the answer to any of these is yes, then GPT-5.5 is a signal that now is the time to design more serious AI workflows.

Start with one process. Pick something repetitive but valuable. For example, weekly competitor research, sales call follow-ups, engineering bug triage, support summaries, or internal reporting.

Then map the workflow clearly.

What information is needed? Which tools are involved? Which steps require reasoning? Which steps are simple formatting? Where should a human approve the output?

Once that is clear, the model choice becomes easier. GPT-5.5 can sit in the parts of the workflow that need judgment and multi-step reasoning. Other models can handle lighter work. Your knowledge base provides grounding. Your integrations provide action. Your recipes make the process repeatable.
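One way to make that mapping concrete is to write the workflow down as data before choosing any tooling. The sketch below is only an illustration: the `Step` fields, the tier labels, and the bug triage steps are hypothetical, not a Springbase recipe format.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    """One step of a mapped workflow (illustrative fields only)."""
    name: str
    needs_reasoning: bool          # judgment or multi-step reasoning required?
    needs_approval: bool = False   # should a human sign off on the output?
    tools: list[str] = field(default_factory=list)


def plan(steps: list[Step]) -> list[tuple[str, str, bool]]:
    """Return (step name, model tier, approval flag) for each step."""
    return [
        (s.name, "frontier" if s.needs_reasoning else "lightweight", s.needs_approval)
        for s in steps
    ]


# A hypothetical engineering bug triage workflow.
bug_triage = [
    Step("collect new issues", needs_reasoning=False, tools=["issue tracker"]),
    Step("diagnose likely cause", needs_reasoning=True, tools=["codebase search"]),
    Step("draft triage summary", needs_reasoning=False),
    Step("assign priority", needs_reasoning=True, needs_approval=True),
]

if __name__ == "__main__":
    for name, tier, approval in plan(bug_triage):
        gate = " (human approval)" if approval else ""
        print(f"{name}: {tier}{gate}")
```

Writing the steps out this way answers the mapping questions directly: each step names its tools, says whether it needs reasoning, and marks where a human approves the result.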

That is how AI moves from novelty to infrastructure.

The Bigger Shift

GPT-5.5 is not just another model release. It is a sign that the industry is getting more serious about delegation.

For years, AI helped us produce content faster. Now it is starting to help us complete work faster.

That shift changes how teams should think.

The winning companies will not be the ones that try every model once. They will be the ones that build systems where better models can immediately create more value.

That means investing in AI workflow automation, AI agents, multi-model AI, AI knowledge bases, live contexts, and connected app integrations.

Models will keep improving. That part is almost guaranteed.

The real question is whether your workflows are ready for them.

At Springbase, this is the future we are building toward: one workspace where teams can use the best models, connect their knowledge, turn repeated work into recipes, and let agents take action across the tools they already use.

With GPT-5.5 now available in Springbase, that future feels even closer.

And for teams paying attention, it is a good moment to start building for it.

Explore Springbase: See how Springbase handles AI workflow automation

Get started: springbase.ai
